Machine learning is becoming an integral part of every aspect of our lives. As these systems grow more complex and make more decisions for us, we need to tackle the biggest barrier to their adoption ⚠️: “How can we trust THAT machine learning model?”

It doesn’t matter how well a machine learning model performs if users do not trust its predictions.

Building trust in machine learning is tough. Loss of trust is possibly the biggest risk that a business can ever face ☠. Unfortunately, people tend to discuss this topic in a very superficial and buzzwordy manner.

In this post, I will present why it is difficult to build trust in machine learning projects. To gain the most business value from the model, we want stakeholders to trust it. We want to provide defensive mechanisms to avoid problems impacting stakeholders and to build developers’ trust in the product.

Trust needs to be earned, and gaining it is not easy, especially when it comes to software. To gain that trust, you want the software to work well, adapt to your needs, and not break while doing so. Entire industries exist to do “just that”: understanding whether a code change may negatively affect the end user, and fixing it in time. A project with machine learning is even tougher for the following reasons:

It’s also worth stating upfront that building trust is hard and often requires a fundamental change in the way systems are designed, developed, and tested. Thus, we should tackle the problem step by step and collect feedback along the way.

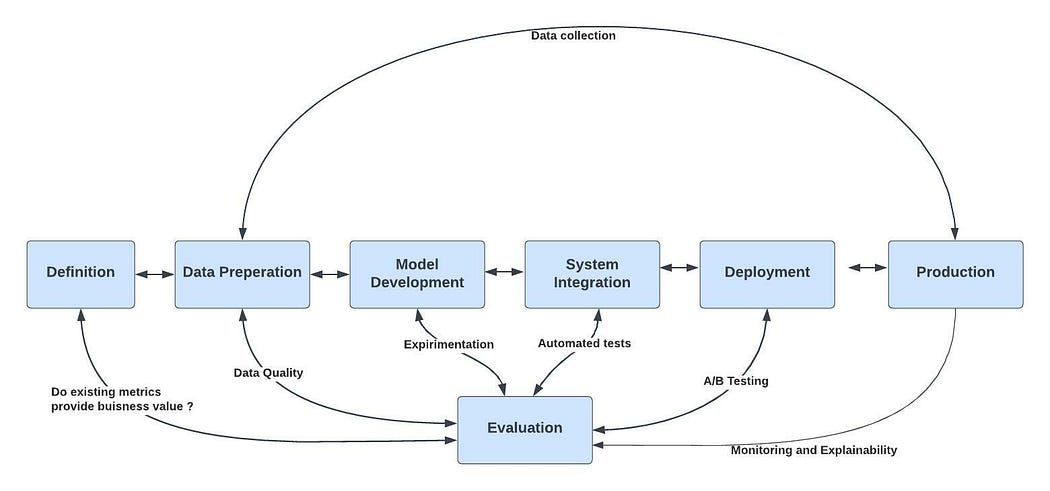

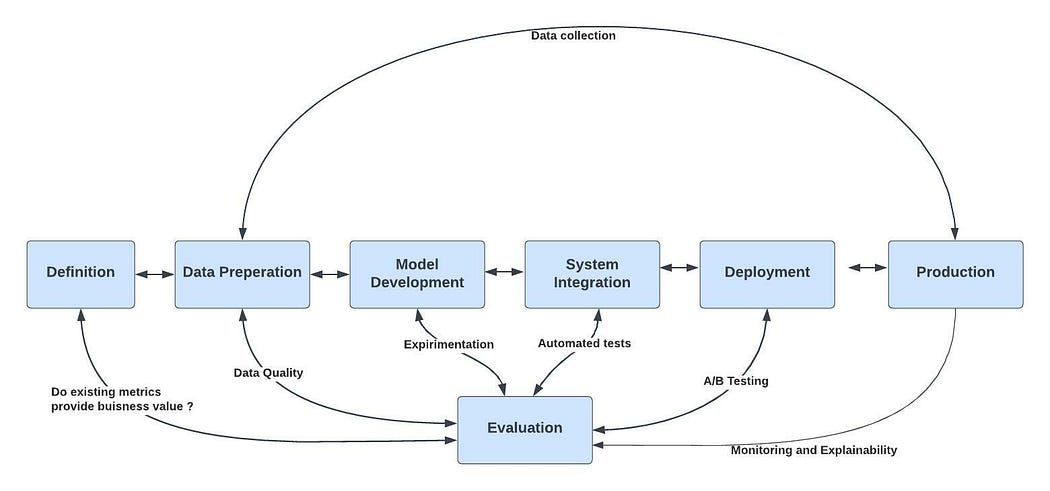

We will cover every step in the machine learning life cycle and what mechanisms we need to harness to improve trust ♻️:

The following flow chart summarizes the defensive mechanisms in each step in the machine learning lifecycle.

The machine learning flow of TRUST

Pro Tip #1🏅remember it’s a journey! You should focus on what hurts you the most and aim for incremental improvements.

Pro Tip #2🏅clear and cohesive communication is as significant as the technical “correctness” of the model.

The first thing we want to do is define the success criteria, so we can make sure we are optimizing the right things.

Like every software project, you can’t tackle a problem effectively without defining the right KPIs 🎯. No single KPI or metric suits every scenario, so you and the stakeholders must agree on the merits of each one before choosing it. There are plenty of popular metrics to choose from, and they fall into two kinds: model metrics and business metrics. Choosing the right metrics is an art.
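To make the distinction concrete, here is a minimal sketch that computes one model metric (precision) and one business metric on the same predictions. The business metric, the net value of a hypothetical fraud-review process where each caught fraud saves a fixed amount and each review costs a fixed amount, is an illustrative assumption, as are the names and dollar values.

```python
from dataclasses import dataclass


@dataclass
class Outcome:
    predicted_fraud: bool  # the model flagged the transaction
    actual_fraud: bool     # ground-truth label


def precision(outcomes: list[Outcome]) -> float:
    """Model metric: fraction of flagged transactions that were truly fraud."""
    flagged = [o for o in outcomes if o.predicted_fraud]
    if not flagged:
        return 0.0
    return sum(o.actual_fraud for o in flagged) / len(flagged)


def net_value(outcomes: list[Outcome], saved_per_catch: float = 100.0,
              cost_per_review: float = 5.0) -> float:
    """Hypothetical business metric: money saved by catching fraud minus review costs."""
    caught = sum(o.predicted_fraud and o.actual_fraud for o in outcomes)
    reviewed = sum(o.predicted_fraud for o in outcomes)
    return caught * saved_per_catch - reviewed * cost_per_review


outcomes = [
    Outcome(True, True), Outcome(True, False), Outcome(False, False),
    Outcome(True, True), Outcome(False, True),
]
print(f"precision={precision(outcomes):.2f}, net_value={net_value(outcomes):.1f}")
```

Two models with the same precision can still produce a very different net value if they flag different volumes of transactions, which is one reason to agree on both kinds of metrics together.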

“The most important metric for your model’s performance is the business metric that it is supposed to influence” — Wafiq Syed

These metrics should be decided before we do any work. Decisions on metrics and KPIs have a HUGE impact on your entire model life cycle, from model development, integration, and deployment to production monitoring and data collection.

Warning #1 ⚠️don’t take this step lightly! Choose your model metrics and business metrics wisely based on your problem.

Warning #2 ⚠️ the “perfect” metric (ideal fit) may change over time.

Pro Tip #1🏅use simple, observable, and attributable metrics.

Pro Tip #2🏅collect the metrics early on. No one enjoys grepping strings in logs later!
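One way to avoid grepping later is to emit metrics as structured records from day one. The sketch below uses only Python’s standard `logging` and `json` modules; the metric name, tags, and the `emit_metric` helper are hypothetical.

```python
import json
import logging
import time

logging.basicConfig(level=logging.INFO, format="%(message)s")
logger = logging.getLogger("metrics")


def emit_metric(name: str, value: float, **tags) -> str:
    """Log a metric as a single JSON line so it can be parsed later instead of grepped."""
    record = {"metric": name, "value": value, "ts": time.time(), **tags}
    line = json.dumps(record, sort_keys=True)
    logger.info(line)
    return line


# Hypothetical usage: tag every metric with the model version and segment it belongs to.
emit_metric("prediction_latency_ms", 12.5, model_version="v3", segment="mobile")
```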

The next step toward building trust is to take care of our data. As the famous quote says, “garbage in, garbage out” (💩+🧠= 💩).

Data is a key part of creating a successful model. You need a high degree of confidence in your data; otherwise, you may have to start collecting it anew. The data should also be accessible, or you won’t be able to use it effectively. You can use tools such as soda, great-expectations, and monte-carlo to help.

“Invalid data will result in flawed models. This in turn will affect downstream services in uncontrolled (and almost always negative) ways.” — Kevin Babitz

Gathering requirements and putting proper validations in place requires some experience 🧓. Data quality comes in two forms:

Intrinsic measures (independent of use-case) like accuracy, completeness, and consistency.

Extrinsic measures (dependent on use-case) like relevance, freshness, reliability, usability, and validity.

Warning #1 ⚠️ data quality never gets enough attention and priority 🔍.

Pro Tip #1🏅basic data quality checks will take you far 🚀. The most prominent issues are missing data, range violations, type mismatches, and freshness.
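Here is a minimal sketch of those four basic checks in plain Python. It assumes each row is a dict with two hypothetical fields, `age` (an integer between 0 and 120) and `updated_at` (a timezone-aware timestamp); tools like the ones mentioned above express the same ideas declaratively.

```python
from datetime import datetime, timedelta, timezone


def check_rows(rows, max_age=timedelta(hours=24), now=None):
    """Return (row_index, issue) pairs for missing values, type mismatches,
    range violations, and stale records. Field names are illustrative."""
    now = now or datetime.now(timezone.utc)
    issues = []
    for i, row in enumerate(rows):
        age = row.get("age")
        if age is None:
            issues.append((i, "missing: age"))
        elif isinstance(age, bool) or not isinstance(age, int):
            # bool is a subclass of int in Python, so reject it explicitly
            issues.append((i, "type mismatch: age"))
        elif not 0 <= age <= 120:
            issues.append((i, "range violation: age"))
        updated = row.get("updated_at")
        if updated is None:
            issues.append((i, "missing: updated_at"))
        elif now - updated > max_age:
            issues.append((i, "stale: updated_at"))
    return issues


now = datetime(2024, 1, 2, tzinfo=timezone.utc)
rows = [
    {"age": 34, "updated_at": now - timedelta(hours=1)},
    {"age": None, "updated_at": now - timedelta(hours=1)},
    {"age": "34", "updated_at": now - timedelta(hours=1)},
    {"age": 250, "updated_at": now - timedelta(days=3)},
]
for issue in check_rows(rows, now=now):
    print(issue)
```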

Pro Tip #2🏅balance validation across different parts of the pipeline. Checking at the source catches sneaky issues exactly when they happen, but its reach is limited to that specific source. Checking the outputs at the end of the pipeline lets you “cover more space”.

Pro Tip #3🏅once basic data quality checks are in place, look at how top tech companies approach data quality.

Pro Tip #4 🏅once basic data quality checks are in place, you can take care of data management.

The next step toward building trust is the model development phase.

Machine learning is experimental by nature 🏞️. You should try different features, algorithms, modeling techniques, and parameter configurations to find what works best for the problem. Since the machine learning life cycle tends to be long and costly until adoption, we should aim to identify problems as early as possible.

You won’t be able to catch all the problematic behavior of your model. No software is bulletproof, and machine learning code is even harder to get right. Still, you should cover everything you can with offline checks.

Pro Tip #1🏅use experiment tracking systems such as ClearML or MLflow to track all the parameters you used for each model.

Pro Tip #2 🏅when dealing with sensitive environments, a policy layer might be handy. This layer adds logic on top of your predictions to “correct” them, which can be extremely useful during anomalous events.
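As an illustration, here is a minimal policy layer for a hypothetical pricing model. The bounds, the fallback price, and the `anomaly_detected` flag (assumed to come from some upstream detector) are all assumptions made for the example.

```python
import math
from dataclasses import dataclass


@dataclass
class Policy:
    min_price: float
    max_price: float
    fallback_price: float

    def apply(self, raw_prediction: float, anomaly_detected: bool = False) -> float:
        """Correct a raw model prediction with business rules before serving it."""
        if anomaly_detected or math.isnan(raw_prediction):
            # During anomalous events (or on invalid output), serve a safe default.
            return self.fallback_price
        # Clamp to the range the stakeholders have signed off on.
        return min(max(raw_prediction, self.min_price), self.max_price)


policy = Policy(min_price=5.0, max_price=50.0, fallback_price=20.0)
print(policy.apply(72.3))                         # clamped to the upper bound
print(policy.apply(30.0, anomaly_detected=True))  # falls back to the safe default
```

Keeping the rules outside the model means they can be reviewed and changed by stakeholders without retraining anything.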

Pro Tip #3 🏅keep good hygiene habits. Use hold-out validation, cross-validation, and sampling techniques.
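A minimal sketch of hold-out plus k-fold splitting with only the standard library: a test set is reserved once and touched only at the very end, while the remaining rows are split into k train/validation folds for model selection. It assumes rows are independent; for time series or grouped data, random shuffling like this can leak information, so split by time or group instead.

```python
import random


def holdout_and_kfold(n_samples, k=5, test_fraction=0.2, seed=42):
    """Shuffle indices once, reserve a hold-out test set, and split the rest into k folds.

    Returns (test_indices, folds), where folds is a list of (train, validation) pairs.
    """
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)  # seeded so the split is reproducible
    n_test = int(n_samples * test_fraction)
    test, rest = indices[:n_test], indices[n_test:]
    folds = []
    for i in range(k):
        val = rest[i::k]  # every k-th index, starting at offset i
        val_set = set(val)
        train = [idx for idx in rest if idx not in val_set]
        folds.append((train, val))
    return test, folds


test_idx, folds = holdout_and_kfold(n_samples=100, k=5)
for train_idx, val_idx in folds:
    pass  # fit on train_idx, score on val_idx; evaluate on test_idx only once at the end
```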

Pro Tip #4 🏅measure training, business, and guardrail metrics on the entire dataset and predefined segments.
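A minimal sketch of measuring a metric on the entire dataset and on predefined segments at once. Accuracy and the `mobile`/`desktop` segments are illustrative assumptions; the same pattern works for business and guardrail metrics.

```python
from collections import defaultdict


def accuracy_by_segment(records):
    """Compute accuracy overall ("ALL") and per segment.

    Each record is a (segment, y_true, y_pred) tuple.
    """
    totals = defaultdict(lambda: [0, 0])  # key -> [correct, count]
    for segment, y_true, y_pred in records:
        for key in ("ALL", segment):
            totals[key][0] += int(y_true == y_pred)
            totals[key][1] += 1
    return {key: correct / count for key, (correct, count) in totals.items()}


records = [
    ("mobile", 1, 1), ("mobile", 0, 0), ("mobile", 1, 0),
    ("desktop", 1, 1), ("desktop", 0, 0), ("desktop", 0, 0),
]
print(accuracy_by_segment(records))
```

Here the overall accuracy (5 of 6) looks healthy, while the `mobile` segment alone is noticeably worse (2 of 3), which is exactly what segment-level reporting is meant to surface.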

Pro Tip #5 🏅due to the dynamic nature of ML, testing is even more critical.

Warning #1 ⚠️ be wary of data leakage.

Warning #2 ⚠️ be wary of overfitting and underfitting.

The next step toward building trust is continuous integration. In this step, we connect the model training code to the rest of the machine learning pipeline to create our release candidates.

In classical software, things break and we need to protect ourselves. One of the classic ways to do so is continuous integration (CI), where we run all our tests: sanity tests, unit tests, integration tests, and end-to-end tests 🏗️.

In machine learning, integration is no longer about a single package or service; it covers the entire pipeline, from model training and validation to generating the candidate models, on a different scale and, hopefully, automatically.

In addition, we should aim for testing in production. This is a relatively new concept in machine learning: you test the model’s ability to predict a known output, or a known property of the output.

To get the desired artifacts, we need to make our entire pipeline reproducible. That’s tough, though. We need to reduce randomness (seed injection) and keep track of everything used to produce each model (data, code, parameters, artifacts). You can use experiment tracking systems like ClearML or MLflow.
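A minimal sketch of the two ingredients, seed injection and a fingerprint of everything that produced a model, using only the standard library. It is not a replacement for ClearML or MLflow, which record this for you; `run_fingerprint` and the `code_version` string (for example a git commit hash) are hypothetical. A real pipeline would also seed every other source of randomness it uses, such as numpy or the training framework.

```python
import hashlib
import json
import random


def set_seed(seed: int) -> None:
    """Seed Python's global RNG (extend this to every library the pipeline uses)."""
    random.seed(seed)


def run_fingerprint(params: dict, data_bytes: bytes, code_version: str) -> str:
    """Hash parameters, data, and code version so two runs can be compared exactly."""
    h = hashlib.sha256()
    h.update(json.dumps(params, sort_keys=True).encode())  # key order doesn't matter
    h.update(hashlib.sha256(data_bytes).digest())
    h.update(code_version.encode())
    return h.hexdigest()


set_seed(7)
first = [random.random() for _ in range(3)]
set_seed(7)
assert first == [random.random() for _ in range(3)]  # same seed, same draws
print(run_fingerprint({"lr": 0.1, "depth": 3}, b"training data", "abc123"))
```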

Pro Tip #1🏅Google wrote an awesome guide about how to perform continuous integration in machine learning projects.

Pro Tip #2🏅reproducibility is key.

Pro Tip #3🏅do proper code review.

Warning ⚠️ whether to automatically retrain the models in each CI run depends on the lift it will give your model and what it will cost your company. You can mitigate the risk with a circuit breaker and by retraining only when certain criteria are met.
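A minimal sketch of such a circuit breaker, under two assumptions: retraining is allowed only when a monitored drift score crosses a threshold, and after several consecutive retrains whose candidate fails to beat production, the breaker opens and blocks automatic retraining until a human resets it. The class, thresholds, and drift score are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class RetrainBreaker:
    drift_threshold: float = 0.2  # retrain only when drift exceeds this
    max_failures: int = 3         # open the breaker after this many failed retrains
    failures: int = 0
    is_open: bool = False

    def should_retrain(self, drift_score: float) -> bool:
        """Allow an automatic retrain only if the breaker is closed and drift is high."""
        return not self.is_open and drift_score > self.drift_threshold

    def record_result(self, candidate_beat_production: bool) -> None:
        """Count consecutive failed retrains; too many opens the breaker."""
        if candidate_beat_production:
            self.failures = 0
        else:
            self.failures += 1
            if self.failures >= self.max_failures:
                self.is_open = True

    def reset(self) -> None:
        """Manual reset, after a human has investigated why retrains keep failing."""
        self.failures = 0
        self.is_open = False
```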

The next step toward building trust is deployment. In this step, our release candidates see some production traffic until they are “worthy” of replacing the existing models.

Once we have passed system integration, we already have our set of trained artifacts. These models showed a lift in model metrics and thus have the potential to deliver a lift in business metrics.

Our goal is to evaluate our potential candidates. We run experiments between the candidates themselves and the current model 🧪 and choose the one that fits us best. You can achieve this using the following mechanisms:

Once we see, with high probability, that a candidate delivers enough lift in production, we route all the traffic to it. Unfortunately, you won’t always see enough lift in real life.
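“With high probability” can be made concrete with a significance test. This is a minimal sketch, assuming a conversion-rate business metric and a simple A/B split between the current model (A) and a candidate (B); it uses a one-sided pooled two-proportion z-test from the standard library, and the traffic numbers are made up. Real experiments also need a sample size fixed in advance and guardrail metrics.

```python
from math import sqrt
from statistics import NormalDist


def lift_p_value(conv_a: int, n_a: int, conv_b: int, n_b: int) -> float:
    """One-sided p-value that candidate B converts better than current model A.

    Pooled two-proportion z-test (normal approximation); assumes 0 < pooled rate < 1.
    """
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    return 1 - NormalDist().cdf(z)


p = lift_p_value(conv_a=480, n_a=10_000, conv_b=560, n_b=10_000)
print(f"p-value: {p:.4f}")  # promote B only if p is below the threshold agreed upfront
```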

When that happens, or when business metrics even degrade, you should roll back to your older models 🔙. To do so, you need to keep versions of your models and track the latest one in a model registry.
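To show the idea behind rollback, here is a minimal in-memory registry that remembers every version and the order in which versions were promoted. Real registries, such as the one in MLflow, persist this and add stages and metadata; the `ModelRegistry` class and its methods here are hypothetical.

```python
class ModelRegistry:
    """Minimal in-memory registry: stores every version and which one is live."""

    def __init__(self):
        self._versions = {}  # version -> model artifact (any object)
        self._history = []   # versions in the order they were promoted

    def register(self, version: str, model) -> None:
        self._versions[version] = model

    def promote(self, version: str) -> None:
        if version not in self._versions:
            raise KeyError(f"unknown version {version}")
        self._history.append(version)

    @property
    def live(self) -> str:
        return self._history[-1]

    def rollback(self) -> str:
        """Return to the previously promoted version and report it."""
        if len(self._history) < 2:
            raise RuntimeError("no earlier version to roll back to")
        self._history.pop()
        return self.live


registry = ModelRegistry()
registry.register("v1", "model-v1")
registry.register("v2", "model-v2")
registry.promote("v1")
registry.promote("v2")
registry.rollback()  # v2 degraded business metrics, so v1 is live again
```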

Pro Tip #1🏅you can use a few deployment mechanisms together.

Pro Tip #2🏅hope for the best and prepare for the worst. You should always have a rollback plan, even if it’s manual.

Warning ⚠️ screwing up an experiment is easy.

The next step toward building trust is making sense of production traffic. We will monitor our models and interpret their predictions.

It’s easy to assume that once your models are deployed they will work well forever, but that’s a misconception 😵‍💫. Models can decay in more ways than conventional software systems, not only because of suboptimal code. Monitoring is one of the most effective ways to understand production behavior and stop degradation in time.

In classical software, we would write logs, keep audits, and track hardware metrics such as RAM, CPU, etc. 🦾 We would also track usage metrics of our application, such as the number of requests per second 📈.

In machine learning applications, we need everything that classical software uses, and more. We need to track the statistical attributes of the input data and the predictions 🤖. In addition, each segment may behave differently. Thus, we need the ability to slice and dice different segments 🍰.

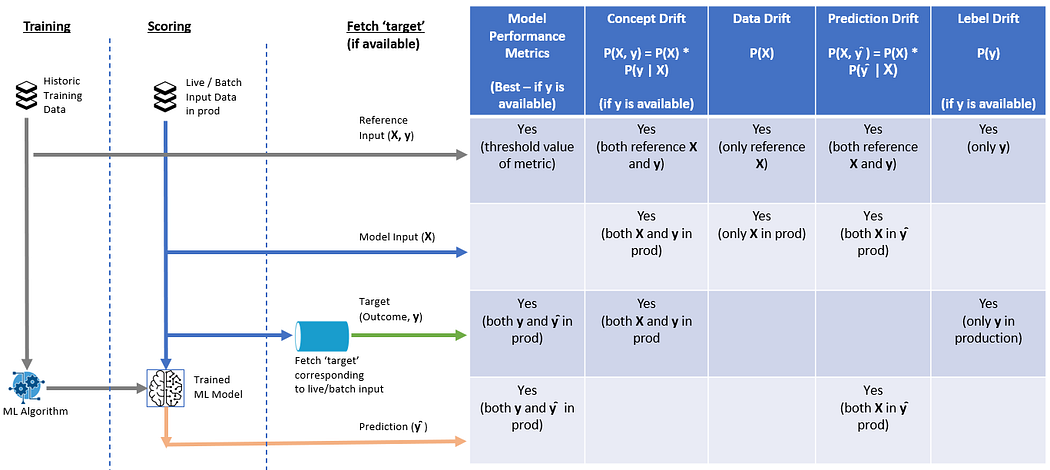

The most common degradation to monitor is drift, which comes in several kinds:

Each kind of drift finds different issues and uses different information.
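As one example of a drift measure, here is a minimal sketch of the Population Stability Index (PSI) between a reference sample (for example, a feature at training time) and a production sample of the same feature. It uses equal-width bins taken from the reference sample; quantile bins are also common. The thresholds often quoted (below 0.1 stable, above 0.25 significant) are rules of thumb, not standards.

```python
import math


def psi(expected, actual, bins=10, eps=1e-6):
    """Population Stability Index: sum over bins of (q - p) * ln(q / p).

    Bin edges come from the reference sample; out-of-range values are clipped
    into the edge bins, and empty bins get a small eps to avoid log(0).
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # guard against a constant reference sample

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        return [max(c / len(values), eps) for c in counts]

    p, q = proportions(expected), proportions(actual)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))


reference = [i / 100 for i in range(1000)]  # values 0.00 .. 9.99
shifted = [x + 5 for x in reference]        # the same feature after a large shift
print(f"no drift: {psi(reference, reference):.3f}, shifted: {psi(reference, shifted):.3f}")
```

The same function can be applied to the model’s output scores to watch for prediction drift.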

In addition, in many scenarios you should monitor these as well:

Many tools, such as fiddler-ai, arize, and aporia, can help you do just that. You can delve into the differences between them in this blog post.

Pro Tip #1🏅ask stakeholders for their worst-case scenarios and turn those fears into metrics.

Pro Tip #2🏅aim to write stable tests. Let the tests work for you and not the opposite.

Pro Tip #3🏅practice good monitoring hygiene, such as making alerts actionable and having a dashboard.

Warning #1⚠️ be wary of alert fatigue.

Warning #2⚠️ be wary of gradual changes and plan your alerts accordingly.

Warning #3⚠️ don’t focus on bias and anomaly detection unless they are critical to your scenario.

The next step toward building trust is to make stakeholders understand model predictions using model interpretability.

Models have long been viewed as black boxes 🗃 due to their lack of interpretability.

Interpretability is about giving humans a mental model of the machine learning model’s behavior.

This is particularly problematic in cases where the margin of error is small❗. For example, some clinicians are hesitant to deploy machine learning models, even though their use could be advantageous. Interpretability comes at a price 💰. There is a tradeoff between model complexity and interpretability. As an example, logistic regression can be quite interpretable, but it might perform worse than a neural network.

Explainability can be global, cohort-level, or local:

Most tools, such as lime and shap, support all of these 🔧🔨.

Pro Tip #1🏅start with an interpretable model: it makes debugging easier, and it might be good enough.

Pro Tip #2🏅keep a set of tough predictions you understand and use them as a sanity check in future model development and CI.
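A minimal sketch of turning those tough cases into a “golden set” that every candidate must pass in CI. The features, expected labels, and `toy_model` are hypothetical stand-ins for your own cases and model.

```python
# Hypothetical golden cases: inputs whose correct prediction the team understands.
GOLDEN_CASES = [
    ({"amount": 9_999.0, "country_mismatch": True}, 1),
    ({"amount": 12.0, "country_mismatch": False}, 0),
]


def check_golden_cases(predict, cases=GOLDEN_CASES):
    """Return the cases a candidate model gets wrong; an empty list means it passes."""
    return [(features, expected) for features, expected in cases
            if predict(features) != expected]


def toy_model(features):
    # Stand-in for a real model, used only to demonstrate the check.
    return int(features["amount"] > 1_000 and features["country_mismatch"])


failures = check_golden_cases(toy_model)
assert not failures, f"golden cases failed: {failures}"
```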

The next step toward building trust is to avoid degradations and even deliver improvements. To do so, we collect new data points and use them the next time we retrain.

As we saw earlier, models decay and after a while they become useless 💩.

As new patterns and trends emerge, the model encounters more and more data points it has not seen during training 👨‍🦯. Some of these turn into errors, which can easily add up over time until you find your company losing money fast.

To deal with this, you should feed your models newly labeled data. Use monitoring to identify which models you should retrain, and when ♾️. Labeled data can take many forms: in some cases, you will get the ground truth with a delay, only a portion of the labels (due to bias), or no ground truth at all 🔮.

The criteria for model retraining depend on the lift it will provide your model and what it will cost your company. It’s a tradeoff between human resources, cloud costs, and business value ⚖️.

Warning #1 ⚠️ there are many challenges ahead including data quality, privacy, scale, cost, and more.

Warning #2 ⚠️ models will eventually degrade as the world changes. Prepare for it.

Pro Tip #1🏅collect only the labeled data you need. No one is brave enough to delete a huge chunk of unneeded data.

Pro Tip #2🏅the amount of automation you built during system integration directly impacts the amount of retraining needed.

In this article, we began by explaining why it’s difficult to trust machine learning models. I have created a flow chart that summarizes the defensive mechanisms in each step. I call it “The machine learning flow of TRUST”.

I hope I was able to share my enthusiasm for this fascinating topic and that you find it useful. You’re more than welcome to drop me a line via email or LinkedIn.

Thanks to Jad Kadan, Almog Baku and Ron Itzikovitch for reviewing this post and making it much clearer.